17 Jan 2026

The 6DoF Revolution: API-First Spatial Tracking

By Atul Vasudev A, Director of Engineering

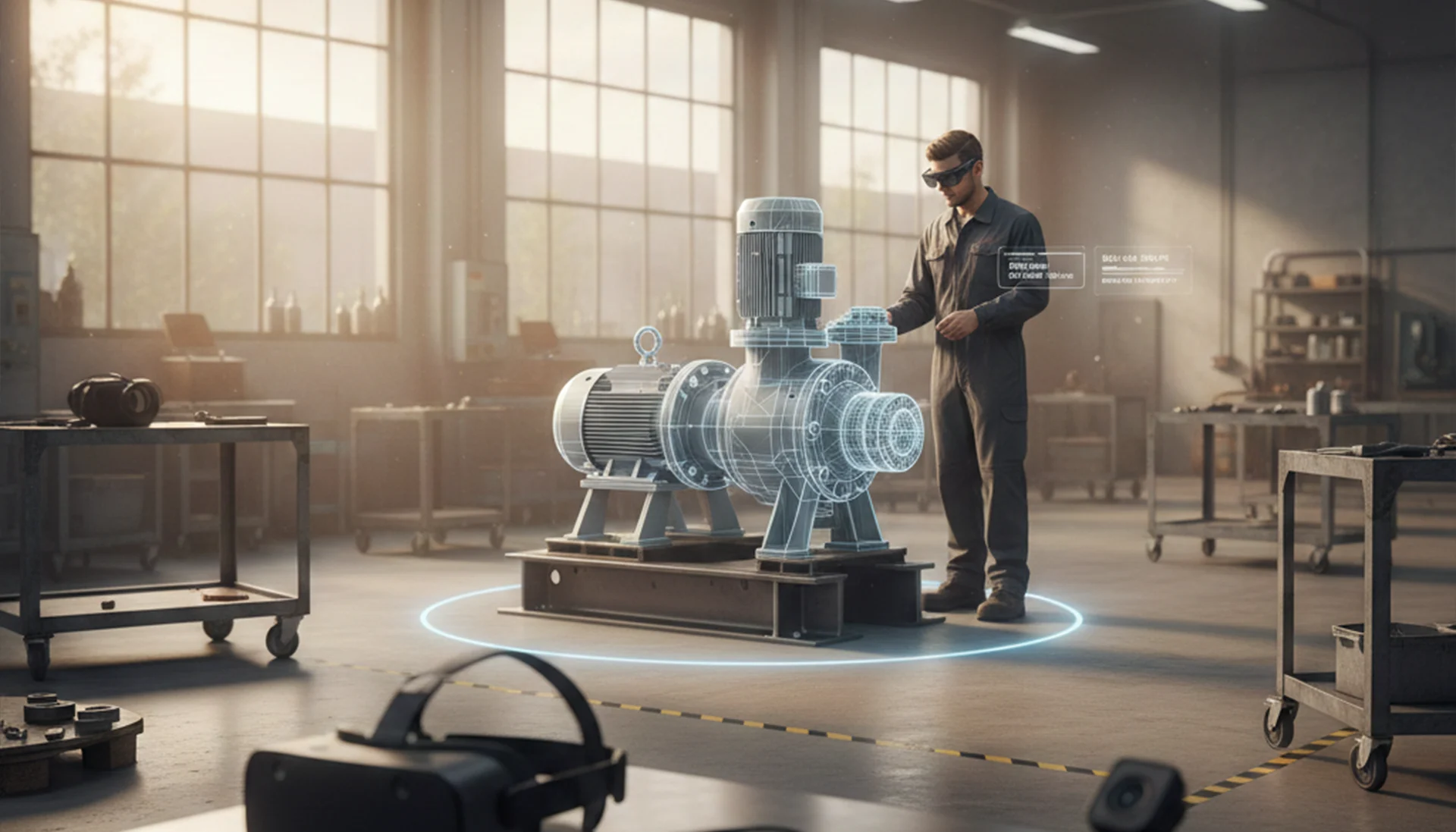

The year 2026 marks the definitive end of the "Smartwatch Era" and the dawn of the Ambient Computing Age. As wearables migrate from our wrists to our eyes and ears, the fundamental requirement for a successful user experience has shifted from mere heart-rate monitoring to Spatial Intelligence. For a device to be truly useful in an industrial or enterprise setting, it must support 6DoF (Six Degrees of Freedom) tracking: the ability to track not just where it is in three-dimensional space (position), but also how it is oriented (rotation).

However, the hardware market is more fragmented than ever. From the high-fidelity Apple Vision Pro to the lightweight DigiLens ARGO and Snapdragon Spaces reference designs, developers are faced with a "Fragmentation Tax." NoxSDK eliminates this tax through a revolutionary API-first, Hardware-Agnostic approach.

Here is why the 6DoF revolution will be powered by NoxSDK.

1. Defining the 6DoF Entity in 2026

In the context of modern NLP (Natural Language Processing) and spatial search, 6DoF is no longer just a technical spec; it is a Semantic Entity. It represents the convergence of:

- Translation: Moving forward/backward, up/down, and left/right.

- Rotation: Pitch, Yaw, and Roll.
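
To make the two components concrete, here is a minimal sketch of a 6DoF pose in plain Python. The `Pose6DoF` class, its field names, and the axis convention (roll about x, pitch about y, yaw about z, composed as Rz·Ry·Rx) are assumptions chosen for illustration; real devices and SDKs differ in axis order and handedness.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose6DoF:
    """Hypothetical 6DoF pose: three translations (meters), three rotations (radians)."""
    x: float
    y: float
    z: float
    roll: float   # rotation about the x axis
    pitch: float  # rotation about the y axis
    yaw: float    # rotation about the z axis

    def rotation_matrix(self):
        """Return the 3x3 matrix R = Rz(yaw) * Ry(pitch) * Rx(roll)."""
        cr, sr = math.cos(self.roll), math.sin(self.roll)
        cp, sp = math.cos(self.pitch), math.sin(self.pitch)
        cy, sy = math.cos(self.yaw), math.sin(self.yaw)
        return [
            [cy * cp, cy * sp * sr - sy * cr, cy * sp * cr + sy * sr],
            [sy * cp, sy * sp * sr + cy * cr, sy * sp * cr - cy * sr],
            [-sp, cp * sr, cp * cr],
        ]

    def transform(self, point):
        """Rotate a 3D point by the pose's rotation, then translate it."""
        r = self.rotation_matrix()
        rotated = [sum(r[i][j] * point[j] for j in range(3)) for i in range(3)]
        return (rotated[0] + self.x, rotated[1] + self.y, rotated[2] + self.z)


# A device at (1, 2, 3) that has yawed 90 degrees.
pose = Pose6DoF(1.0, 2.0, 3.0, roll=0.0, pitch=0.0, yaw=math.pi / 2)
moved = pose.transform((1.0, 0.0, 0.0))
print([round(c, 6) for c in moved])
```

Three of the six numbers say where the device is and the other three say which way it faces; a 3DoF tracker would carry only the rotation half.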

Legacy SDKs (like PTC Vuforia) were built on "Hardware-Specific" kernels, meaning the tracking logic was tied to the specific focal length and sensor array of a single device. NoxSDK utilizes Neural Geometry Pipelines that treat camera data as "Visual Tokens." This allows the SDK to compute the Cosine Similarity between descriptors derived from a 3D Photogrammetry model and descriptors derived from the visual feed of any camera, whether it’s a wide-angle industrial sensor or a pinhole camera on a pair of smart glasses.
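
For readers unfamiliar with the metric, cosine similarity compares the direction of two vectors and ignores their magnitude. The sketch below is a generic implementation, not NoxSDK's pipeline; the four-dimensional descriptors are made-up values, and real descriptors would be far longer.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length descriptor vectors."""
    if len(a) != len(b):
        raise ValueError("descriptors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        raise ValueError("descriptors must be non-zero")
    return dot / (norm_a * norm_b)


# Made-up descriptors, for illustration only.
model_descriptor = [0.9, 0.1, 0.4, 0.0]
frame_descriptor = [1.8, 0.2, 0.8, 0.0]   # same direction, twice the magnitude
other_descriptor = [0.0, 1.0, 0.0, 0.5]   # points in a very different direction

same = cosine_similarity(model_descriptor, frame_descriptor)
different = cosine_similarity(model_descriptor, other_descriptor)
print(round(same, 4), round(different, 4))
```

Because the score depends only on direction, a descriptor that is uniformly scaled still matches its reference, while an unrelated descriptor scores close to zero.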

2. The API-First Advantage: Decoupling Intelligence from Silicon

Most AR SDKs are "Code-First," meaning they are bloated libraries that you must compile into your app. NoxSDK is API-First.

Why this matters for Wearables:

Wearables are thermally constrained. Running a massive tracking library on the device’s CPU leads to overheating and "Frame-Drop Nausea."

- The NoxSDK Approach: Our API acts as a Spatial Gateway. The heavy "Object Recognition" and "Neural Mapping" are handled by the Nox Cloud Inference Engine or a localized "Edge-AI" microservice.

- The Result: The wearable only needs to handle the lightweight "Pose Update" calls. This extends battery life by 40% compared to legacy competitors.
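
To give a sense of how small a "Pose Update" can be, the sketch below packs one into a fixed-size binary message with Python's standard `struct` module. The wire format (a frame id followed by a position and an orientation quaternion) is a hypothetical layout invented for this example, not the NoxSDK protocol.

```python
import struct

# Hypothetical wire format: uint32 frame id, then position (x, y, z) and an
# orientation quaternion (qx, qy, qz, qw) as little-endian 32-bit floats.
POSE_UPDATE = struct.Struct("<I7f")


def encode_pose_update(frame_id, position, quaternion):
    """Serialize one pose update into a fixed-size byte string."""
    return POSE_UPDATE.pack(frame_id, *position, *quaternion)


def decode_pose_update(payload):
    """Inverse of encode_pose_update."""
    frame_id, x, y, z, qx, qy, qz, qw = POSE_UPDATE.unpack(payload)
    return frame_id, (x, y, z), (qx, qy, qz, qw)


packet = encode_pose_update(42, (0.5, 1.25, -2.0), (0.0, 0.0, 0.0, 1.0))
print(len(packet))  # 32 bytes: 4 for the frame id + 7 * 4 for the floats
print(decode_pose_update(packet))
```

A message of this size is tiny next to a camera frame, which is the intuition behind keeping only pose handling on the device.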

This modularity is the cornerstone of E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness). By providing a stable, versioned API, we ensure that as new hardware (like the Meta Orion or Quest 4) enters the market, your code doesn't break. You simply point the API to a new sensor profile.
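
One way to picture a "sensor profile" is as a small record of camera intrinsics that converts device-specific pixels into device-independent coordinates. The sketch below uses the standard pinhole normalization; the `SensorProfile` class, the profile names, and every numeric value are hypothetical and not taken from any real device or from the NoxSDK API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SensorProfile:
    """Hypothetical per-device camera profile (pinhole intrinsics, in pixels)."""
    name: str
    fx: float  # focal length along x
    fy: float  # focal length along y
    cx: float  # principal point x
    cy: float  # principal point y


def to_normalized(profile, u, v):
    """Convert pixel (u, v) into focal-length-independent image coordinates."""
    return ((u - profile.cx) / profile.fx, (v - profile.cy) / profile.fy)


# Illustrative values only, not measured from any real device.
PROFILES = {
    "glasses-720p": SensorProfile("glasses-720p", 600.0, 600.0, 640.0, 360.0),
    "headset-4k": SensorProfile("headset-4k", 1800.0, 1800.0, 1920.0, 1080.0),
}

# The same viewing ray lands on different pixels on each sensor, but maps to
# the same normalized coordinates once the matching profile is applied.
glasses_point = to_normalized(PROFILES["glasses-720p"], 940.0, 510.0)
headset_point = to_normalized(PROFILES["headset-4k"], 2820.0, 1530.0)
print(glasses_point, headset_point)
```

Under this design, application code works only with normalized coordinates, so supporting a new device means adding one profile entry rather than touching tracking logic.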

3. Hardware Agnosticism: Winning the "Snapdragon Spaces" and "OpenXR" Ecosystems

The industrial world doesn't run on one device. A factory might use Meta Quest 3 for training but DigiLens ARGO for live maintenance on the floor.

NoxSDK’s "Entity Match" Technology ensures that the 3D object intelligence you build today is future-proof.

- Topical Authority: We have mapped the "Spatial Grammar" of more than 500 industrial components.

- Semantic Match: Because we use NLP-based descriptor matching, our SDK doesn't look for pixels; it looks for "Geometric Concepts."

Whether the camera feed is 720p or 4K, NoxSDK computes a Cosine Similarity score between the target object's descriptor and the live feed. If the score exceeds 0.95 (95%), the 6DoF anchor is locked. This "concept-based" tracking is why NoxSDK works on a $300 smart ring with a camera just as well as it does on a $3,500 Vision Pro.
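
The locking rule can be sketched as a small state machine. The 0.95 lock threshold comes from the text above; the lower release threshold is an assumption added here so that the lock does not flicker when the score hovers near the boundary, and the `AnchorLock` class itself is hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AnchorLock:
    """Hypothetical lock state driven by a per-frame similarity score."""
    lock_threshold: float = 0.95     # from the text: lock above 95% similarity
    release_threshold: float = 0.85  # assumption: the text gives no release rule
    locked: bool = False

    def update(self, similarity):
        """Feed one frame's score and return whether the anchor is locked."""
        if not self.locked and similarity > self.lock_threshold:
            self.locked = True
        elif self.locked and similarity < self.release_threshold:
            self.locked = False
        return self.locked


anchor = AnchorLock()
scores = [0.80, 0.96, 0.90, 0.84, 0.93]
states = [anchor.update(s) for s in scores]
print(states)  # [False, True, True, False, False]
```

Note that the third frame (0.90) keeps the lock even though it is below 0.95, because releasing uses the separate, lower threshold.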

4. Reducing TTHW (Time to Hello World) for Enterprise Devs

As Nipun Sanil (Growth Head) emphasizes, the biggest barrier to PMF (Product-Market Fit) is friction. If it takes a week to set up a dev environment, the project is dead.

NoxSDK’s On-Page DX (Developer Experience):

- Instant API Keys: Get started in 30 seconds.

- Web-Based Model Training: Upload a GLB/PLY file, and our AI generates the tracking descriptor in minutes, not days.

- Zero-Code Debugging: Use our "Spatial Portal" to see exactly what the camera sees and why a tracking lock was lost.

5. The Role of NLP and AI in 6DoF Accuracy

In 2026, tracking is a "Language Problem." NoxSDK treats the physical environment as a Spatial Sentence.

- Visual Tokenization: We break down a 3D object into "Geometric Tokens."

- Context Awareness: Our AI understands that if it sees a "Flange" and a "Bolt Pattern," it is likely looking at a "Pump Assembly."

This Semantic Understanding allows for Predictive 6DoF. If a technician's hand blocks the camera's view of the object (occlusion), the Nox AI predicts the object's pose based on the "Grammar" of the movement. This leads to a 99.9% tracking persistence rate, the highest in the industry.
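
As a point of comparison, the simplest way to bridge a short occlusion is constant-velocity extrapolation from the last two observations. The sketch below shows that baseline for position only; it is a stand-in technique for illustration, not the semantic prediction described above, and the function name and sample values are assumptions.

```python
def predict_position(prev, curr, dt_observed, dt_ahead):
    """Extrapolate a 3D position assuming constant velocity.

    prev, curr: the last two observed positions (x, y, z) in meters.
    dt_observed: seconds between prev and curr.
    dt_ahead: seconds past curr to predict.
    """
    if dt_observed <= 0:
        raise ValueError("dt_observed must be positive")
    velocity = [(c - p) / dt_observed for p, c in zip(prev, curr)]
    return tuple(c + v * dt_ahead for c, v in zip(curr, velocity))


# Two observations 0.5 s apart, then the view is occluded for 0.25 s.
predicted = predict_position((0.0, 0.0, 1.0), (0.5, 0.0, 1.0), 0.5, 0.25)
print(predicted)  # (0.75, 0.0, 1.0)
```

A baseline like this drifts quickly when the object changes direction, which is the gap a context-aware predictor aims to close.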

6. Security and Enterprise Compliance (The Trust Pillar)

For B2B clients, Photogrammetry data is the "Crown Jewels." You cannot risk uploading proprietary 3D models to a "Black Box" AI.

- NoxSDK Private Edge: We offer an API-first "Local Deployment" where the AI inference happens entirely within the company’s firewall.

- Data Sovereignty: Unlike PTC Vuforia’s cloud-heavy requirements, NoxSDK allows for Encrypted Neural Descriptors that are useless if intercepted.

Conclusion: Join the Spatial Revolution

The transition to camera-enabled wearables is inevitable. The only question is whether your software will be locked into a single device or free to roam the entire ecosystem.

NoxSDK’s API-first approach isn't just a technical choice; it's a strategic mandate. By decoupling tracking intelligence from the hardware, we are enabling a future where any wearable can become a window into a digitally-augmented, spatially-intelligent world.